How to Optimize SEO Automation for Maximum Traffic: A Framework That Actually Works

Manual SEO at scale is genuinely brutal. Between auditing hundreds of pages, tracking keyword positions across dozens of clusters, publishing consistent content week after week, and fixing technical issues before they affect rankings, even the sharpest marketing teams hit a productivity ceiling well before they run out of opportunities to pursue. The ceiling is not a talent problem. It is a leverage problem.

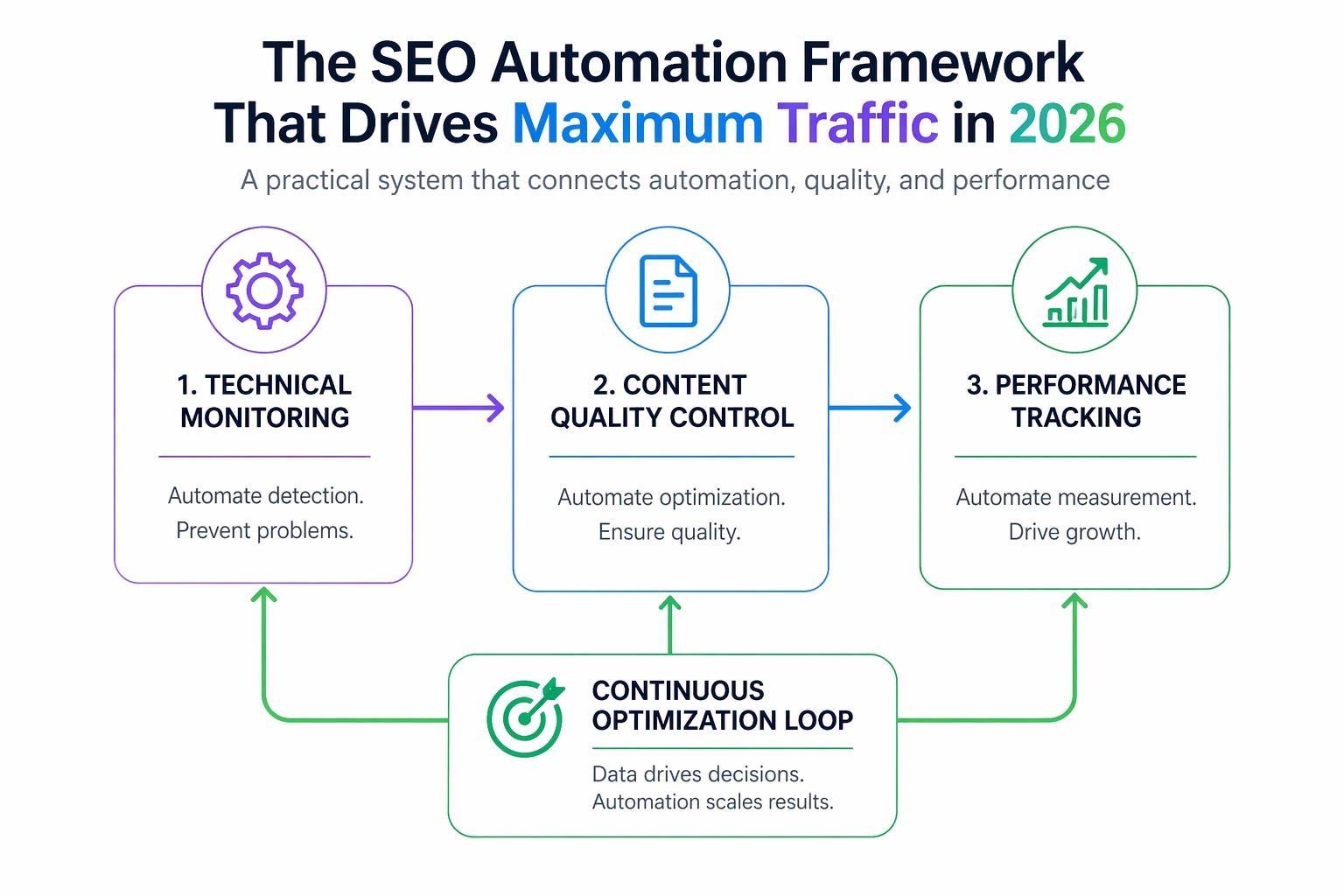

The data is clear on what the right automation framework delivers. Automated technical SEO fixes improved impressions by 56.4% across nearly 39,000 sites while cutting critical site issues by 85%. That kind of lift does not come from handing everything to a script. It comes from knowing precisely which tasks are safe to automate, which require human oversight, and how to wire the whole system together so it keeps improving over time rather than quietly drifting off course.

This guide walks through that framework in practical terms: what to automate, how to choose and integrate tools without creating integration chaos, how to apply automation to content without triggering quality penalties, and how to monitor the whole operation so your gains compound rather than erode.

The Single Most Important Decision in SEO Automation

Before any tool is selected or any workflow is built, you have to draw the line between work that belongs to machines and work that belongs to humans. It is the most important decision you will make in SEO automation. Under-automate and you stay stuck at the same output ceiling. Over-automate and you produce technically correct content that no one wants to read, and the resulting quality penalties undo your gains faster than you can rebuild them.

The work that automation handles reliably includes technical site audits, keyword data aggregation, rank tracking and alert generation, reporting and dashboard compilation, and meta tag generation at scale. These tasks share three characteristics: they follow defined rules, their quality can be objectively measured, and their output does not depend on understanding what a real person actually finds useful or interesting.

The work that must stay human includes content strategy, E-E-A-T signals, creative angles and tone, and relationship-dependent activities like link building outreach. These tasks require the kind of judgment that AI systems apply inconsistently: understanding your specific audience, recognizing when a technically correct answer is not actually the most helpful one, and producing content that reflects genuine expertise rather than pattern-matched language from training data.
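The split described above can be written down as a simple triage rule. The sketch below is only an illustration: the `Task` fields and the `triage` function are hypothetical names, and the true/false values are judgments your team would supply, not something a script can infer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    rule_based: bool               # follows defined rules
    objectively_measurable: bool   # quality can be checked without opinion
    needs_audience_judgment: bool  # depends on what real readers find useful


def triage(task: Task) -> str:
    """Return 'automate' only when all three criteria from the text hold."""
    if task.rule_based and task.objectively_measurable and not task.needs_audience_judgment:
        return "automate"
    return "human"


# Example inputs; the flags are assumptions made for illustration.
tasks = [
    Task("technical site audit", True, True, False),
    Task("rank tracking alerts", True, True, False),
    Task("content strategy", False, False, True),
    Task("link building outreach", False, False, True),
]

for task in tasks:
    print(f"{task.name}: {triage(task)}")
```

The rule is deliberately strict: a task that fails any one of the three tests stays with a human, which errs on the side of under-automating rather than over-automating.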

The most expensive mistake teams make is over-automating into the human-dependent category. Publishing AI-generated content without editorial review, running keyword insertion scripts that trigger spam signals, and using bulk redirect tools without checking destination quality are all examples of automation applied where human judgment was required. The usual consequence is a slow decline in domain quality signals over months, which is harder to diagnose and slower to recover from than a sharp algorithmic penalty.

Choosing Tools Without Creating Integration Nightmares

Once you know what to automate, the next challenge is selecting tools that cover your actual bottlenecks without requiring a full engineering team to keep them connected. Most teams overthink tool selection. The goal is not to have every available feature. The goal is to have coverage across the tasks that are consuming disproportionate manual hours, with those tools connected to each other so information flows without constant human facilitation.

The core coverage you need for effective SEO automation falls into four areas. Technical auditing handles crawling and issue detection, surfacing problems like broken links, redirect chains, missing tags, and Core Web Vitals failures before they affect rankings. Monitoring and alerts handle uptime and ranking changes, configured to notify the right person when the right threshold is crossed. Content tools handle draft generation, optimization scoring against top-ranking pages, and readability analysis. Reporting handles dashboard compilation and automated delivery so your team spends time interpreting data rather than assembling it.
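To make the technical auditing area concrete, here is a minimal sketch of one check it covers: finding redirect chains and loops. It assumes your crawler can export redirects as a plain source-to-target mapping. The function name `find_redirect_chains`, the `max_hops` limit, and the sample URLs are illustrative, not part of any specific tool.

```python
from __future__ import annotations


def find_redirect_chains(redirects: dict[str, str], max_hops: int = 10) -> dict[str, list[str]]:
    """Map each problem URL to the full path it follows.

    A URL is reported when it needs more than one hop to resolve
    or when its path revisits a URL (a redirect loop).
    """
    chains: dict[str, list[str]] = {}
    for start in redirects:
        path = [start]
        seen = {start}
        current = start
        looped = False
        # Follow redirects until a final URL, a loop, or the hop limit.
        while current in redirects and len(path) <= max_hops:
            current = redirects[current]
            path.append(current)
            if current in seen:
                looped = True
                break
            seen.add(current)
        if looped or len(path) > 2:
            chains[start] = path
    return chains


# Hypothetical crawl export: source URL -> redirect target.
crawl_redirects = {
    "/old-pricing": "/pricing-2023",
    "/pricing-2023": "/pricing",
    "/blog/a": "/blog/b",
    "/blog/b": "/blog/a",
    "/about-us": "/about",
}

for url, path in find_redirect_chains(crawl_redirects).items():
    print(" -> ".join(path))
```

Single-hop redirects such as `/about-us` are left out on purpose, so the report only contains items that need fixing.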

The setup sequence that works reliably starts with connecting your site to Search Console and configuring crawl error alerts. Schedule automated crawls on a weekly cadence using your technical audit tool, checking for the highest-impact issue categories. Configure rank tracking for your most important keywords with threshold-based alerts so drops register before they become traffic problems. Integrate your audit findings into a centralized reporting dashboard so the information is available without manual compilation.
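The threshold-based rank alerts in that sequence can be sketched in a few lines. This example assumes you already have two position snapshots per keyword from your rank tracker. The `rank_drop_alerts` name, the `min_drop` default of 5 positions, and the sample data are assumptions for illustration.

```python
from __future__ import annotations


def rank_drop_alerts(
    previous: dict[str, int],
    current: dict[str, int],
    min_drop: int = 5,
) -> list[tuple[str, int, int]]:
    """Return (keyword, old_position, new_position) for meaningful drops.

    Positions are 1-based, so a larger number means a worse ranking.
    """
    alerts = []
    for keyword, old_pos in previous.items():
        new_pos = current.get(keyword)
        if new_pos is None:
            continue  # keyword missing from this snapshot; review separately
        if new_pos - old_pos >= min_drop:
            alerts.append((keyword, old_pos, new_pos))
    # Largest drops first, so the most urgent item leads the notification.
    return sorted(alerts, key=lambda a: a[2] - a[1], reverse=True)


# Hypothetical snapshots from two consecutive tracking runs.
last_week = {"seo automation": 4, "rank tracker": 8, "site audit": 12}
this_week = {"seo automation": 11, "rank tracker": 9, "site audit": 30}

for keyword, old_pos, new_pos in rank_drop_alerts(last_week, this_week):
    print(f"{keyword}: {old_pos} -> {new_pos}")
```

A one-position move such as "rank tracker" going from 8 to 9 is ignored, so ordinary fluctuation does not reach anyone's inbox.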

One practical note on alert configuration: start with conservative thresholds. Teams that configure highly sensitive alerts in the first week end up ignoring them by the second week because the signal-to-noise ratio is too low. Fewer, higher-quality notifications that represent genuine action items beat a constantly updating inbox that trains your team to filter everything out. The full feature set in FluxSERP is designed around this principle, with alerts and monitoring configured to surface what matters rather than everything.

Applying Automation to Content Without Getting Penalized

Content is where the stakes in SEO automation are highest, and where the failure modes are most consequential. The good news is that there is a clear line between the parts of content work that automation handles well and the parts that require human judgment.

Automation reliably handles topic ideation based on keyword gaps and competitor analysis, content brief generation with target keywords and structure, optimization scoring against top-ranking pages, and readability analysis. It also handles internal link suggestions based on semantic relevance and meta description drafts for bulk page updates.
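Bulk meta description drafts are a good example of output that can be checked by rule before a human ever reads it. The sketch below flags empty, overlong, and duplicated drafts. The 160-character limit is a common rule of thumb rather than a documented search engine limit, and `check_meta_descriptions` and the sample URLs are hypothetical.

```python
from __future__ import annotations

from collections import Counter


def check_meta_descriptions(drafts: dict[str, str], max_len: int = 160) -> dict[str, list[str]]:
    """Return {url: [issues]} for drafts that need attention before review."""
    # Count normalized texts so duplicates are caught regardless of case.
    counts = Counter(text.strip().lower() for text in drafts.values())
    problems: dict[str, list[str]] = {}
    for url, text in drafts.items():
        cleaned = text.strip()
        issues = []
        if not cleaned:
            issues.append("empty")
        elif len(cleaned) > max_len:
            issues.append("too long")
        if cleaned and counts[cleaned.lower()] > 1:
            issues.append("duplicate")
        if issues:
            problems[url] = issues
    return problems


# Hypothetical batch of generated drafts.
drafts = {
    "/guides/crawling": "Weekly crawl checklist for large sites.",
    "/guides/audits": "Weekly crawl checklist for large sites.",
    "/guides/alerts": "",
}

for url, issues in check_meta_descriptions(drafts).items():
    print(url, issues)
```

Checks like these narrow down what the editor has to look at. They do not replace the editorial review described next.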

The parts of content work that require a human in the loop include the final editorial review before publishing, adding first-hand experience and original insights that differentiate your content from everything else in the SERP, adjusting tone for your specific audience, fact-checking all statistics and claims, and ensuring E-E-A-T signals like author credentials and sourcing are present and accurate.

The practical payoff from this hybrid model is meaningful. AI-generated drafts cut production time significantly and reduce time-to-publish when paired with human editing. But those gains only hold when the human editing layer is actually doing its job rather than rubber-stamping whatever the AI produced. The way to think about this is that AI handles the structure and volume while humans handle the substance and judgment. When either layer is missing, the output quality degrades in ways that are hard to catch in the short term and expensive to recover from in the medium term.

Before publishing any AI-generated content, run it through a simple quality check: does this add a perspective that is not already available in the top results? Does it include a real example, original data, or specific insight that comes from actual expertise? If the answer to both is no, the content is thin content waiting to be penalized, regardless of its keyword optimization score. The FluxSERP AI SEO tool is built to flag this kind of issue before content goes live, not after.
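The two-question check can be enforced as a publishing gate in your workflow. In this sketch the answers are recorded by a human editor, not computed. `DraftReview` and `ready_to_publish` are hypothetical names, and the optimization score is carried along only to show that it does not influence the decision.

```python
from dataclasses import dataclass


@dataclass
class DraftReview:
    title: str
    adds_new_perspective: bool    # editor: not already covered in the top results
    has_firsthand_evidence: bool  # editor: real example, original data, or expert insight
    optimization_score: int = 0   # recorded, but intentionally ignored by the gate


def ready_to_publish(review: DraftReview) -> bool:
    """Block a draft only when the answer to both questions is no."""
    return review.adds_new_perspective or review.has_firsthand_evidence


# Hypothetical review records filled in by an editor.
reviews = [
    DraftReview("Crawl budget basics", False, False, optimization_score=95),
    DraftReview("What we learned migrating 2,000 URLs", True, True, optimization_score=70),
]

for review in reviews:
    status = "publish" if ready_to_publish(review) else "hold: thin content"
    print(f"{review.title}: {status}")
```

The first draft is held despite its high optimization score, which mirrors the point above: keyword optimization does not rescue thin content.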

Monitoring Your Automation So Gains Compound Instead of Erode

The most common failure mode in SEO automation is not a bad tool choice or a poorly designed workflow. It is treating automation as a one-time setup rather than an ongoing system. Teams that automate, stop watching, and assume everything is running fine are the ones who eventually discover six months of gradual domain quality degradation with no clear cause.

A monitoring layer that keeps your automation honest has five components: a performance dashboard that connects Search Console and GA4 into a centralized view; weekly automated reporting on traffic, clicks, impressions, and average position changes; threshold-based alerts for traffic drops that exceed a meaningful week-over-week percentage; a technical health score that updates after every automated crawl; and a scheduled monthly review where you evaluate what your automation is actually producing versus what it was designed to produce.
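The week-over-week traffic alert is simple arithmetic, and it helps to see it spelled out. This sketch assumes you have already pulled weekly click totals from your reporting source. The function names and the 20% default threshold are illustrative assumptions, and what counts as "meaningful" will depend on your site's normal variance.

```python
from __future__ import annotations


def week_over_week_change(previous: float, current: float) -> float | None:
    """Percentage change from the previous week; None if there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) / previous * 100


def traffic_drop_alert(previous: float, current: float, max_drop_pct: float = 20.0) -> bool:
    """True when traffic fell by at least max_drop_pct percent week over week."""
    change = week_over_week_change(previous, current)
    return change is not None and change <= -max_drop_pct


# Hypothetical weekly click totals.
last_week_clicks = 1000
this_week_clicks = 750

change = week_over_week_change(last_week_clicks, this_week_clicks)
print(f"change: {change:.1f}%")  # change: -25.0%
print("alert:", traffic_drop_alert(last_week_clicks, this_week_clicks))  # alert: True
```

Returning `None` for a zero baseline keeps new pages and new properties from generating meaningless alerts.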

The alerts matter as much as the dashboard. The most important ones to configure are crawl errors and 4xx spikes, Core Web Vitals failures on your highest-traffic pages, new ranking opportunities entering the top 20 for monitored keywords, drops in indexed page count, and changes in backlink profile quality. These are the signals that indicate your automation is either working or has drifted into producing something you did not intend.
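As one example from that list, a 4xx spike can be detected by comparing today's count against a recent baseline. This is a minimal sketch under stated assumptions: daily 4xx counts come from your crawl or server logs, and `is_4xx_spike`, the 3x multiplier, and the floor of 10 errors are hypothetical defaults you would tune.

```python
from __future__ import annotations

from statistics import mean


def is_4xx_spike(
    history: list[int],
    today: int,
    multiplier: float = 3.0,
    min_count: int = 10,
) -> bool:
    """True when today's 4xx count is well above the recent daily average.

    min_count keeps very small sites from alerting on a handful of errors.
    """
    if not history:
        return False  # no baseline yet, so nothing to compare against
    baseline = mean(history)
    return today >= min_count and today > baseline * multiplier


# Hypothetical daily 4xx counts from the previous four days.
recent_days = [4, 6, 5, 5]

print(is_4xx_spike(recent_days, today=40))
print(is_4xx_spike(recent_days, today=12))
```

Comparing against a moving baseline instead of a fixed number means the alert adapts as the site grows, which fits the drift concern raised above.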

The broader principle here is treating your automation setup like a product rather than a deployment. Products get reviewed, updated, and improved based on real feedback. Automation that is deployed and never revisited becomes a liability as search behavior evolves, algorithm priorities shift, and the gaps between what your automation was configured for and what the search landscape actually rewards grow wider.

The FluxSERP SEO toolkits are structured around this continuous monitoring model, providing the feedback loops that tell you whether your automation is compounding your gains or quietly working against them.

What Happens When Automation Runs Without Checkpoints

The pattern of what goes wrong when automation runs without human checkpoints is consistent enough to describe clearly. One variant involves scaling programmatic content to large page counts without quality review, which produces crawl budget waste and domain quality degradation over months rather than weeks, making the cause hard to identify when rankings eventually decline. Another involves configuring technical automation that keeps applying the same rules long after those rules stopped being appropriate for how the site evolved, which produces accumulated technical debt that requires a full audit to untangle.

The lesson is not that automation causes these problems. Manual SEO causes the same problems at slower speeds. The lesson is that automation scales both your wins and your mistakes at the same rate, which means the oversight and review layer needs to match the scale of the automation rather than staying the same size it was when the team was doing everything manually.

Smart automation is iterative. You build it, measure what it produces, refine based on what you find, and repeat. The teams that get compounding results from SEO automation are not the ones who built the most sophisticated initial setup. They are the ones who stayed engaged with what the setup was producing and kept improving it based on real data. That engagement is what the FluxSERP platform is designed to support, giving your team the visibility it needs to stay ahead of drift rather than catching up to it after the fact.

Frequently Asked Questions

Which SEO tasks should never be fully automated?

Tasks that depend on creative strategy, E-E-A-T signals, or a genuine understanding of user intent should always involve a human. This includes content strategy decisions, editorial review, tone and voice calibration, fact-checking, and any content that draws on first-hand experience or original expertise. Over-automating these tasks produces content that is technically optimized but substantively thin, and quality evaluation systems are increasingly effective at identifying it.

What is the biggest risk of over-automating SEO?

The biggest risk is thin content at scale producing domain quality degradation that is slow to diagnose and slow to recover from. Unlike a sharp algorithmic penalty that produces a clear signal, quality degradation from over-automation tends to manifest as gradual ranking erosion across many pages simultaneously, which can take months to fully surface and months more to reverse once identified. The early warning sign is typically engagement metrics declining before ranking metrics do.

How should alert thresholds be configured in SEO monitoring?

Start conservative and tighten over time based on real signal-to-noise ratios. A team that receives twenty notifications on the first day will ignore them by the third day. Begin with threshold settings that only trigger for genuine high-priority issues and adjust based on whether the alerts you receive are producing action or producing noise.
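One way to judge whether alerts are producing action or noise is to log, for each alert, whether anyone acted on it. The sketch below computes that ratio and turns it into a suggestion. The function names, the 0.5 cutoff, and the wording of the advice are assumptions for illustration.

```python
from __future__ import annotations


def actionable_ratio(acted_on: list[bool]) -> float | None:
    """Share of alerts that led to action; None when no alerts were logged."""
    if not acted_on:
        return None
    return sum(acted_on) / len(acted_on)


def threshold_advice(acted_on: list[bool], min_ratio: float = 0.5) -> str:
    """Suggest a direction for threshold changes based on the logged alerts."""
    ratio = actionable_ratio(acted_on)
    if ratio is None:
        return "no alerts yet: keep current thresholds"
    if ratio < min_ratio:
        return "mostly noise: make thresholds stricter"
    return "mostly actionable: thresholds can become more sensitive gradually"


# Hypothetical log for one week: True means someone acted on the alert.
week_log = [True, False, False, False]

print(actionable_ratio(week_log))  # 0.25
print(threshold_advice(week_log))
```

Reviewing this ratio on the same schedule as your automation reviews gives you evidence for each threshold change.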

How long before SEO automation improvements show up in traffic?

Technical SEO automation typically produces measurable results within 30 to 60 days because improving crawlability and fixing structural issues has relatively direct effects on how content is indexed and ranked. Content automation takes longer because new pages need to be crawled, indexed, and accumulate engagement signals before ranking effects become visible. Most teams see meaningful traffic movement within 60 to 90 days of deploying content automation with quality controls in place.

What does an effective SEO automation review cycle look like?

Monthly is the right cadence for reviewing your automation scope and confirming that your rules and configurations still match current search behavior and site goals. Quarterly is the right cadence for a deeper audit that evaluates whether each automation layer is producing the value it was designed to produce or whether it has drifted. The specific questions to ask in a quarterly review are: which automated content is getting indexed and ranking, which automated technical fixes have been applied most frequently, and whether the alert thresholds are catching genuine issues or generating noise.

SEO automation done well is one of the highest-leverage investments a marketing team can make. But leverage cuts both ways. The same systems that scale your wins scale your mistakes at equal speed. The teams that win long-term are the ones who build automation that is monitored, maintained, and improved based on what it actually produces rather than what it was assumed to produce when it was set up.

Stop Guessing. Start Tracking What Your Automation Actually Produces.

FluxSERP connects your rankings, AI Overview citations, technical health, and competitor data into one dashboard so your automation always has the right inputs.

Written by Catalin Dinca